| Automatic Speech Recognition (speech to text): extract text from an audio or video file, with automatic language detection, automatic punctuation, and word-level timestamps, in 100 languages. | We use OpenAI's Whisper Large model. | Playground >> |
| Classification: send a piece of text, and let the AI apply the right categories to it, in many languages. As an option, you can suggest the potential categories you want to assess. | We use GPT-OSS 120B, LLaMA 3.1 405B, and an in-house NLP Cloud model called Fine-tuned LLaMA 3.3 70B. We also use Bart Large MNLI Yahoo Answers and XLM Roberta Large XNLI by Joe Davison. | Playground >> |
| Chatbot/Conversational AI: converse fluently with an AI and get relevant answers, in many languages. | We use GPT-OSS 120B, LLaMA 3.1 405B, and in-house NLP Cloud models called ChatDolphin and Fine-tuned LLaMA 3.3 70B. We also use Dolphin Yi 34B and Dolphin Mixtral 8x7B by Eric Hartford. | Playground >> |
| Code generation: generate source code from a simple instruction, in any programming language. | We use GPT-OSS 120B, LLaMA 3.1 405B, and in-house NLP Cloud models called ChatDolphin and Fine-tuned LLaMA 3.3 70B. We also use Dolphin Yi 34B and Dolphin Mixtral 8x7B by Eric Hartford. | Playground >> |
| Dialogue Summarization: summarize a conversation, in many languages. | We use Bart Large CNN SamSum by Philipp Schmid. | Playground >> |
| Embeddings: calculate embeddings in more than 50 languages. | We use several Sentence Transformers models like Paraphrase Multilingual Mpnet Base V2. | |
| Grammar and spelling correction: send a block of text and let the AI correct the mistakes for you, in many languages. | We use GPT-OSS 120B, LLaMA 3.1 405B, and in-house NLP Cloud models called ChatDolphin and Fine-tuned LLaMA 3.3 70B. We also use Dolphin Yi 34B and Dolphin Mixtral 8x7B by Eric Hartford. | Playground >> |
| Headline generation: send a text, and get a very short summary suited for headlines, in many languages. | We use T5 Base EN Generate Headline by Michal Pleban. | Playground >> |
| Intent Classification: understand the intent from a piece of text, in many languages. | We use GPT-OSS 120B, LLaMA 3.1 405B, and in-house NLP Cloud models called ChatDolphin and Fine-tuned LLaMA 3.3 70B. We also use Dolphin Yi 34B and Dolphin Mixtral 8x7B by Eric Hartford. | Playground >> |
| Keywords and keyphrases extraction: extract the main keywords from a piece of text, in many languages. | We use GPT-OSS 120B, LLaMA 3.1 405B, and an in-house NLP Cloud model called Fine-tuned LLaMA 3.3 70B. | Playground >> |
| Language Detection: detect one or several languages from a text. | We use Python's LangDetect library. | Playground >> |
| Lemmatization: extract lemmas from a text, in many languages. | All the large spaCy models are available. | |
| Named Entity Recognition (NER): extract structured information from an unstructured text, like names, companies, countries, job titles... in many languages. | We use GPT-OSS 120B, LLaMA 3.1 405B, and an in-house NLP Cloud model called Fine-tuned LLaMA 3.3 70B. We also use all the large spaCy models. | Playground >> |
| Noun Chunks: extract noun chunks from a text, in many languages. | All the large spaCy models are available. | |
| Paraphrasing and rewriting: generate similar content with the same meaning, in many languages. | We use GPT-OSS 120B, LLaMA 3.1 405B, and an in-house NLP Cloud model called Fine-tuned LLaMA 3.3 70B. | Playground >> |
| Part-Of-Speech (POS) tagging: assign parts of speech to each word of your text, in many languages. | All the large spaCy models are available. | |
| Question answering: ask questions about anything, in many languages. As an option, you can give a context so the AI uses it to answer your question. | We use GPT-OSS 120B, LLaMA 3.1 405B, and in-house NLP Cloud models called ChatDolphin and Fine-tuned LLaMA 3.3 70B. We also use Roberta Base Squad 2 by Deepset, and Dolphin Yi 34B and Dolphin Mixtral 8x7B by Eric Hartford. | Playground >> |
| Semantic Search: search your own data, in more than 50 languages. | Create your own semantic search / RAG model from your own domain knowledge (internal documentation, contracts...) and ask semantic questions about it. | Playground >> |
| Semantic Similarity: detect whether two pieces of text have the same meaning, in more than 50 languages. | We use Paraphrase Multilingual Mpnet Base V2. | Playground >> |
| Sentiment and emotion analysis: determine sentiments and emotions from a text (positive, negative, fear, joy...), in many languages. We also have an AI for financial sentiment analysis. | We use DistilBERT Base Uncased Finetuned SST-2, DistilBERT Base Uncased Emotion, and Finbert by Prosus AI. | Playground >> |
| Speech Synthesis (Text-To-Speech): convert text to audio. | We use Speech T5 by Microsoft. | Playground >> |
| Summarization: send a text, and get a shorter text that keeps only the essential information, in many languages. | We use GPT-OSS 120B, LLaMA 3.1 405B, and in-house NLP Cloud models called ChatDolphin and Fine-tuned LLaMA 3.3 70B. We also use Bart Large CNN by Meta, and Dolphin Yi 34B and Dolphin Mixtral 8x7B by Eric Hartford. | Playground >> |

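The Embeddings and Semantic Similarity rows above rely on sentence embeddings. As a rough illustration of how two embeddings can be compared, here is a minimal sketch using cosine similarity, a common choice with Sentence Transformers models. How the service scores similarity internally is not stated here, so treat the metric as an assumption. The vectors and the `cosine_similarity` helper are hypothetical toy values written for this example, not output from Paraphrase Multilingual Mpnet Base V2.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Hypothetical 3-dimensional embeddings; real sentence embeddings
# have hundreds of dimensions.
emb_cat = [0.9, 0.1, 0.0]
emb_kitten = [0.8, 0.2, 0.1]
emb_invoice = [0.0, 0.1, 0.9]

# Texts with close meanings should score higher than unrelated ones.
print(cosine_similarity(emb_cat, emb_kitten))
print(cosine_similarity(emb_cat, emb_invoice))
```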
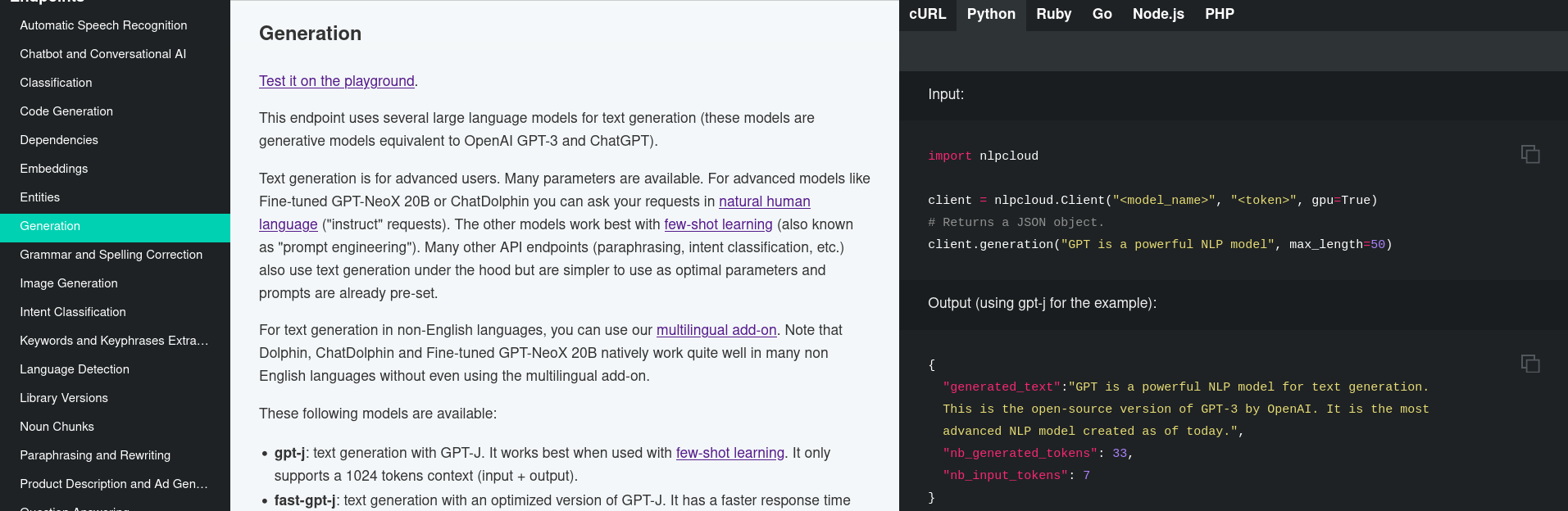

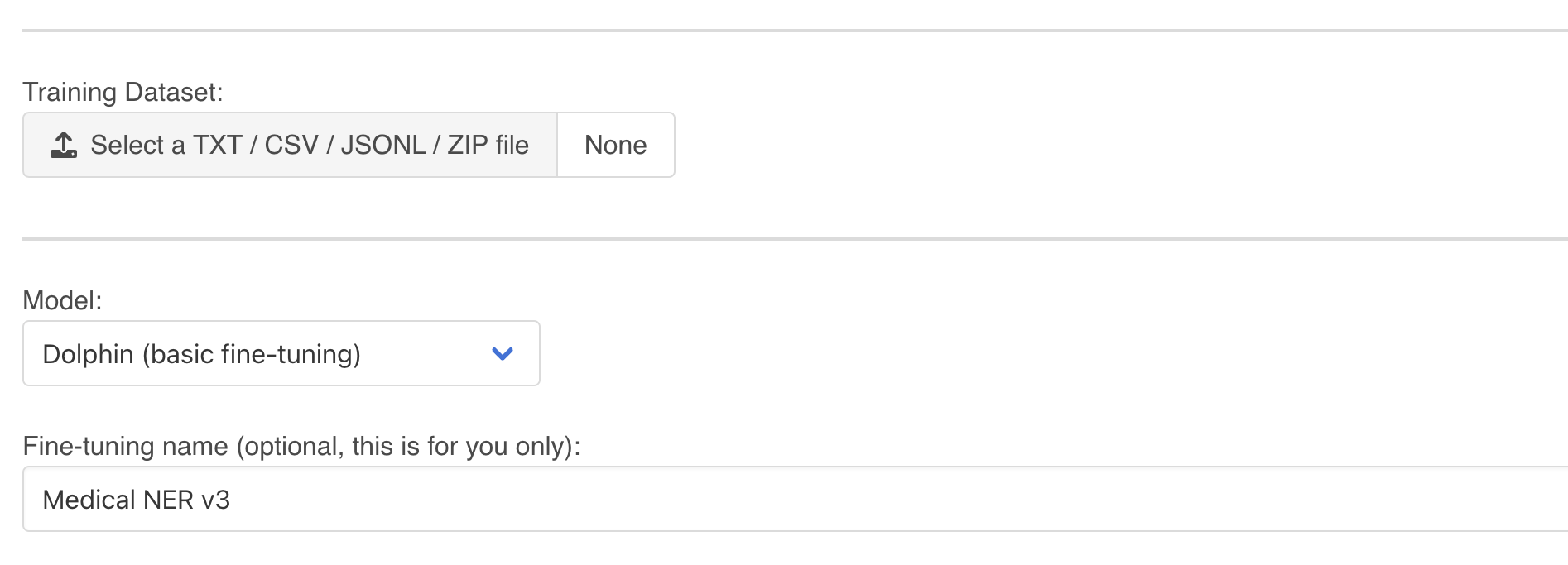

| Text generation: achieve all the most advanced AI use cases by either making requests in natural language ("instruct" requests) or using few-shot learning. | We use GPT-OSS 120B, LLaMA 3.1 405B, and in-house NLP Cloud models called ChatDolphin and Fine-tuned LLaMA 3.3 70B. We also use Dolphin Yi 34B and Dolphin Mixtral 8x7B by Eric Hartford. You can also fine-tune your own text generation model for even better results. | Playground >> |
| Tokenization: extract tokens from a text, in many languages. | All the large spaCy models are available. | |
| Translation: translate text between 200 languages, with automatic input language detection. | We use NLLB 200 3.3B by Meta. | Playground >> |
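
The Text generation row mentions few-shot learning as an alternative to "instruct" requests. The idea is to show the model a handful of solved examples and leave the last one open for it to complete. Below is a minimal sketch of how such a prompt could be assembled. The `build_few_shot_prompt` helper, the `Text` / `Sentiment` labels, and the `###` separator are all hypothetical choices made for this illustration, not a format required by any particular model.

```python
def build_few_shot_prompt(examples, query, input_label="Text", output_label="Sentiment"):
    """Format solved examples followed by one unanswered query."""
    blocks = [
        f"{input_label}: {text}\n{output_label}: {label}"
        for text, label in examples
    ]
    # The final block has no answer, so the model is expected to fill it in.
    blocks.append(f"{input_label}: {query}\n{output_label}:")
    # "###" is an arbitrary separator picked for this sketch.
    return "\n###\n".join(blocks)


examples = [
    ("I loved this film.", "positive"),
    ("The service was terrible.", "negative"),
]
prompt = build_few_shot_prompt(examples, "The battery lasts all day.")
print(prompt)
```

The resulting string would then be sent as the input of a text generation request. An "instruct" request would instead state the task directly in natural language, without worked examples.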