Pricing: Hugging Face VS NLP Cloud

First, it's worth noting that the NLP Cloud API can be tested for free on both CPU and GPU (thanks to the free plan and to the pay-as-you-go plan, which includes 100k free tokens), while the Hugging Face API can only be tested for free on a CPU (thanks to its free plan). This is an important difference, since the most interesting Transformer-based AI models run much faster on a GPU, and some simply cannot run on a CPU at all.

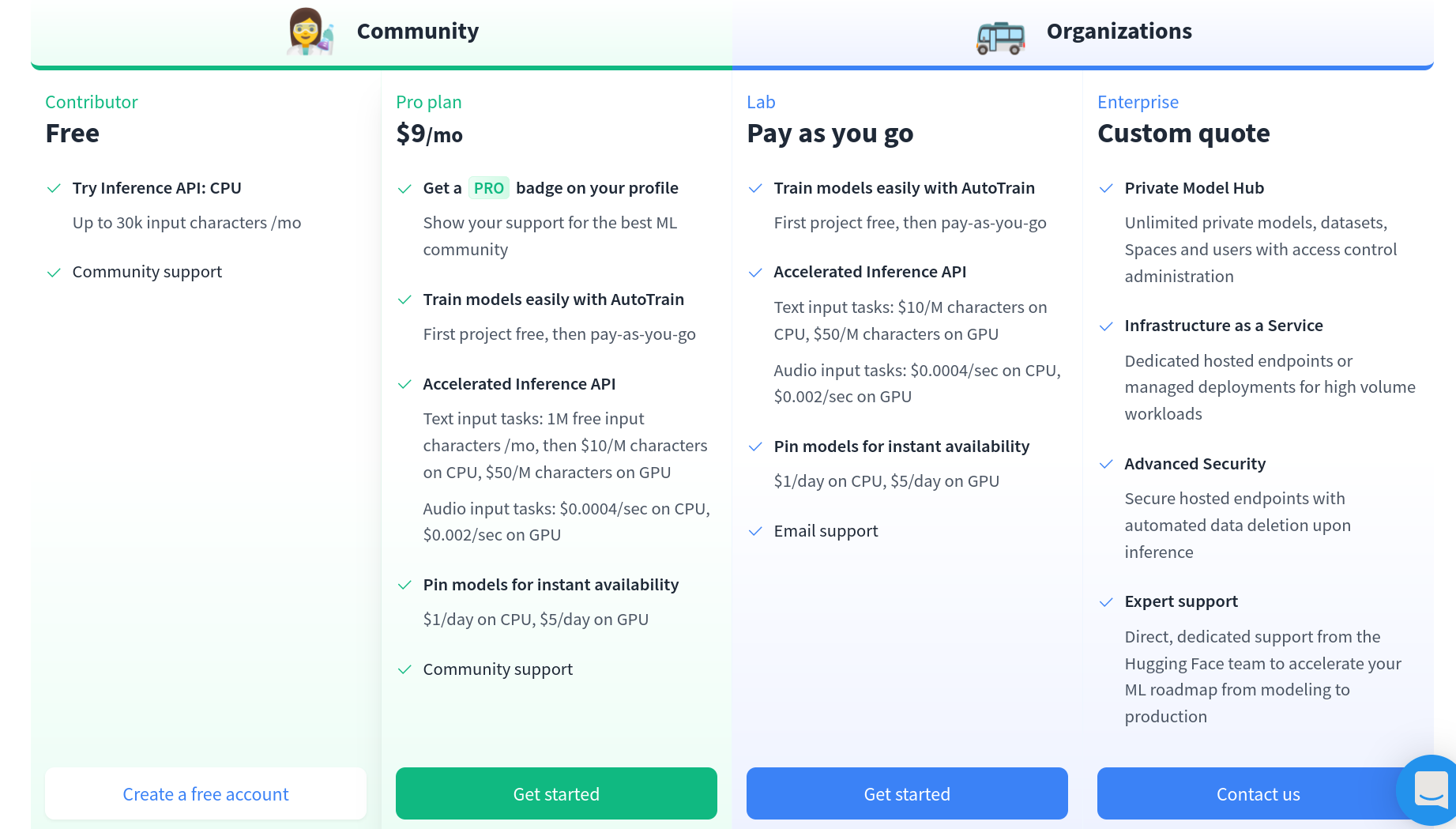

Hugging Face Pricing

In terms of plans, Hugging Face only offers pay-as-you-go pricing (based on your consumption), while NLP Cloud offers both pre-paid plans and pay-as-you-go plans. Let's say you want to perform text classification on a GPU, on texts that contain around 5k words on average, at a rate of 15 requests per minute. Hugging Face's pricing is based on the number of characters, while NLP Cloud's is based on the number of tokens. 5k words are roughly equivalent to 15k characters, or 3,750 tokens. On NLP Cloud, this will cost you $99/month with the Starter GPU Plan, while on Hugging Face, at $50 per million characters, it will cost you 15k x 15 x 60 x 24 x 31 x $50 / 1M ≈ $502k/month (!!!).
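As a quick sanity check, the Hugging Face estimate above can be reproduced in a few lines of Python. This is only a sketch: the constants are the assumptions used in this example (15k characters per request, 15 requests per minute, a 31-day month, $50 per million characters on a GPU, and the $99 Starter GPU Plan), and the function names are purely illustrative.

```python
# Assumptions taken from the example above (estimates, not official quotes)
CHARS_PER_REQUEST = 15_000                 # ~5k words per text
REQUESTS_PER_MINUTE = 15
MINUTES_PER_MONTH = 60 * 24 * 31           # a 31-day month
HF_GPU_PRICE_PER_MILLION_CHARS = 50.0      # USD per 1M characters (GPU)
NLPCLOUD_STARTER_GPU_MONTHLY = 99.0        # USD, flat monthly plan


def monthly_characters(chars_per_request: int, requests_per_minute: int, minutes: int) -> int:
    """Total number of characters sent to the API over one month."""
    return chars_per_request * requests_per_minute * minutes


def pay_as_you_go_cost(total_chars: int, price_per_million: float) -> float:
    """Cost in USD when billing is per million characters."""
    return total_chars * price_per_million / 1_000_000


monthly_chars = monthly_characters(CHARS_PER_REQUEST, REQUESTS_PER_MINUTE, MINUTES_PER_MONTH)
hf_monthly_cost = pay_as_you_go_cost(monthly_chars, HF_GPU_PRICE_PER_MILLION_CHARS)

print(f"Characters per month: {monthly_chars:,}")
print(f"Hugging Face (pay-as-you-go, GPU): ${hf_monthly_cost:,.0f}/month")
print(f"NLP Cloud (Starter GPU Plan): ${NLPCLOUD_STARTER_GPU_MONTHLY:,.0f}/month")
```

With these inputs the script reports 10,044,000,000 characters and $502,200/month for Hugging Face, versus $99/month for NLP Cloud. Changing the constants lets you rerun the estimate for your own workload.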

As you can see, Hugging Face's pay-as-you-go pricing does not seem suited at all for production use. Hardly anyone is going to pay such a price for text classification on a GPU...

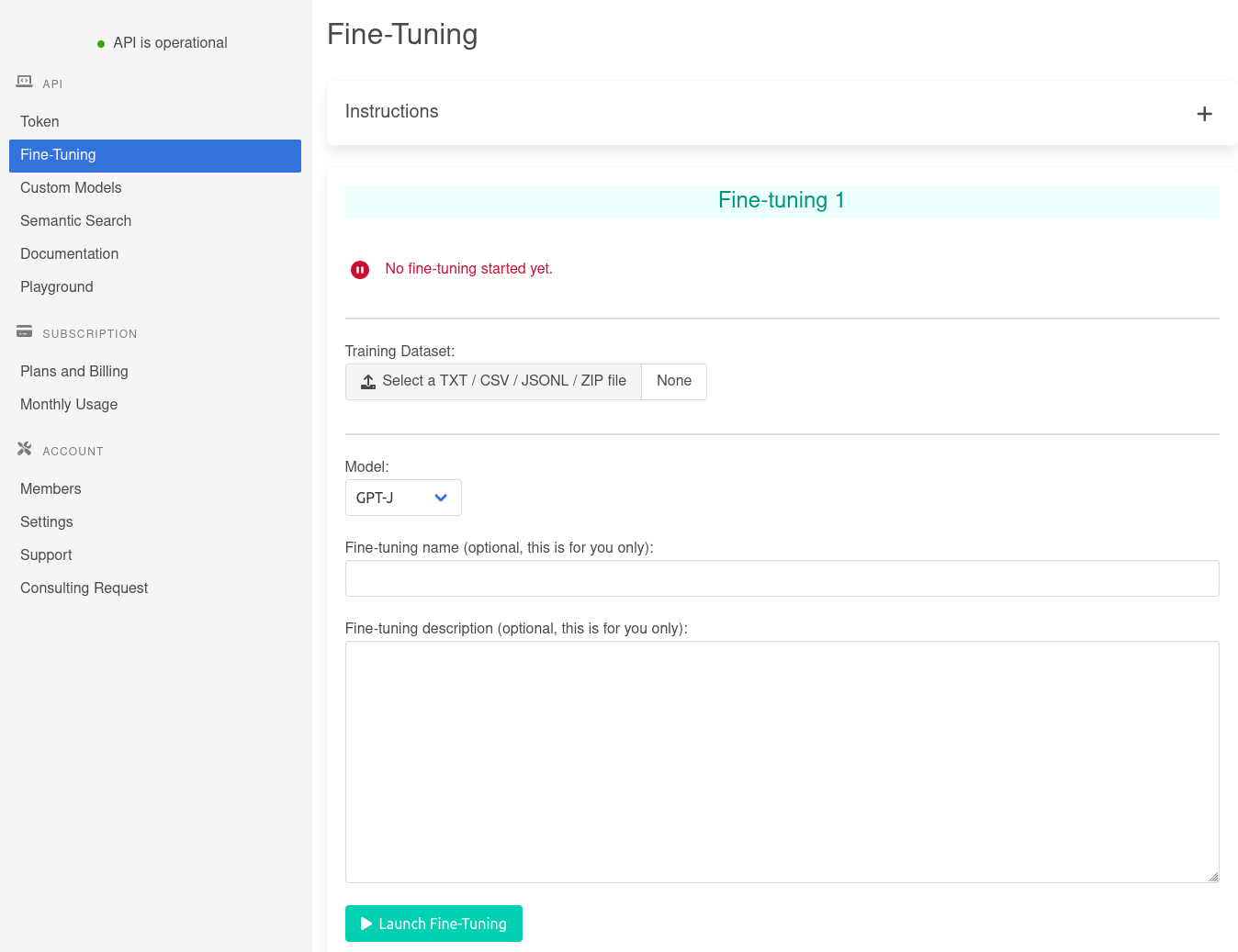

As far as fine-tuning is concerned, a comparison is not even possible, because Hugging Face's AutoTrain pricing is not public. We registered and tried their AutoTrain solution, but were still unable to find any clear pricing...